Enabling Multilingual Neural Machine Translation with TensorFlow

At the recent TensorFlow meetup, attendees learnt about several distinct software patterns and found out how to contribute one of their own. An engineer from the Google Brain team also explored how to improve conventional machine translation with TensorFlow.

Software patterns in TensorFlow

In his session, Garrett Smith of Guild AI explored a challenge posed by TensorFlow’s flexibility: discovering and applying effective software patterns among the many options the library offers. Having worked with dozens of TensorFlow projects, he discussed which patterns work and which don’t.

As an example, Garrett presented function-defined globals, a pattern intended to improve the readability and maintainability of a script in two ways:

- Concede that TensorFlow scripts program by side effects

- Formalize the definition of global state using functions and Python’s `global` statement

This pattern encourages smaller functions that are easier to modify, enables efficient code reuse, and removes the need for sophisticated state definitions when documenting shared state.
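The idea can be illustrated without TensorFlow itself. The following is a minimal sketch, not Garrett's code: plain Python lists and closures stand in for tensors and ops, and every name (`init_data`, `init_model`, `init_train`, `train`) is a hypothetical choice made for this illustration. Each `init_*` function declares, through `global`, exactly which shared names it creates.

```python
# Sketch of function-defined globals. Plain Python objects stand in for
# TensorFlow tensors and ops (an assumption made for this illustration).

def init_data():
    # Declares the shared training data.
    global inputs, labels
    inputs = [[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
    labels = [1.0, 1.0, 2.0]

def init_model():
    # Declares the shared parameters and the prediction function.
    global weights, predict
    weights = [0.0, 0.0]

    def predict(x):
        return sum(w * xi for w, xi in zip(weights, x))

def init_train(learning_rate=0.1):
    # Declares the shared training step (one pass of per-example updates).
    global train_step

    def train_step():
        for x, y in zip(inputs, labels):
            error = predict(x) - y
            for i, xi in enumerate(x):
                weights[i] -= learning_rate * error * xi

def train(steps=200):
    # The script reads as a sequence of side-effecting initializers.
    init_data()
    init_model()
    init_train()
    for _ in range(steps):
        train_step()
    return weights
```

Calling `train()` fits the two weights of this toy linear model. The point of the sketch is the layout: the `global` line at the top of each function documents the shared state that function is responsible for.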

Garrett demonstrated how to apply the function-defined globals pattern to the MNIST data set. You can check out the source code of his example.

Finally, he outlined some guidelines and acceptance criteria for those eager to submit a TensorFlow pattern of their own.

You may browse through Garrett’s slides from the meetup.

Automating phrase-based machine translation

Xiaobing Liu from the Google Brain team gave insights into applying machine learning techniques to automate translation systems and enhance conventional phrase-based machine translation (PBMT).

Using sequence-to-sequence models means carrying all data about a sentence in a single internal state, which takes time. Attention models, which underlie Brain neural machine translation (BNMT), address this issue by giving the decoder access to all encoder states, which makes translation independent of a sentence’s length.
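To make "access to all encoder states" concrete, here is a minimal sketch of one attention step in plain Python. It assumes dot-product scoring for brevity, which is not necessarily the scoring function used in BNMT, and the names `softmax` and `attend` are hypothetical.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(decoder_state, encoder_states):
    # Score every encoder state against the current decoder state
    # (dot product is an assumption chosen for simplicity).
    scores = [sum(d * e for d, e in zip(decoder_state, h))
              for h in encoder_states]
    weights = softmax(scores)
    # The context vector is the attention-weighted sum of encoder states.
    dim = len(encoder_states[0])
    context = [sum(w * h[i] for w, h in zip(weights, encoder_states))
               for i in range(dim)]
    return weights, context
```

Because the context vector is recomputed from all encoder states at each decoding step, the decoder does not have to squeeze the whole source sentence into one fixed-size state.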

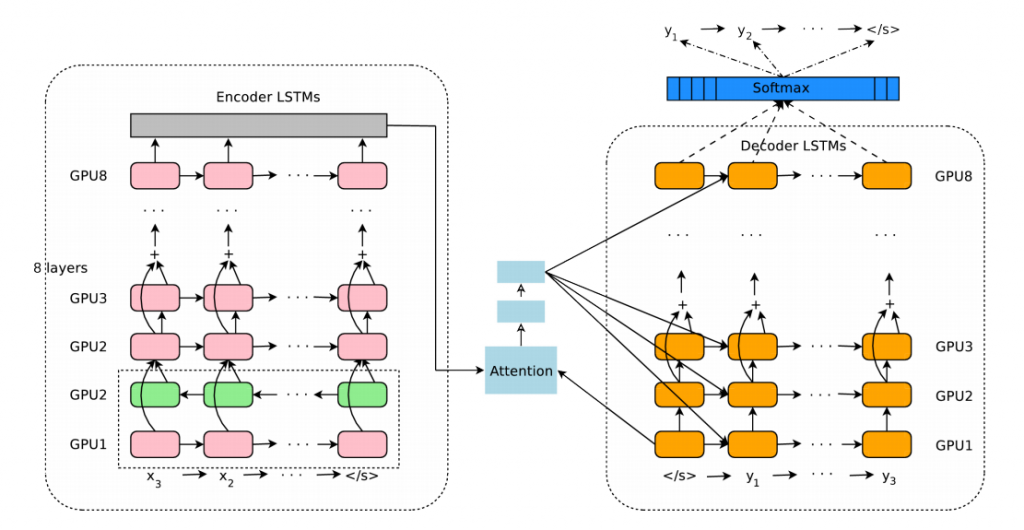

BNMT model architecture (Image credit)

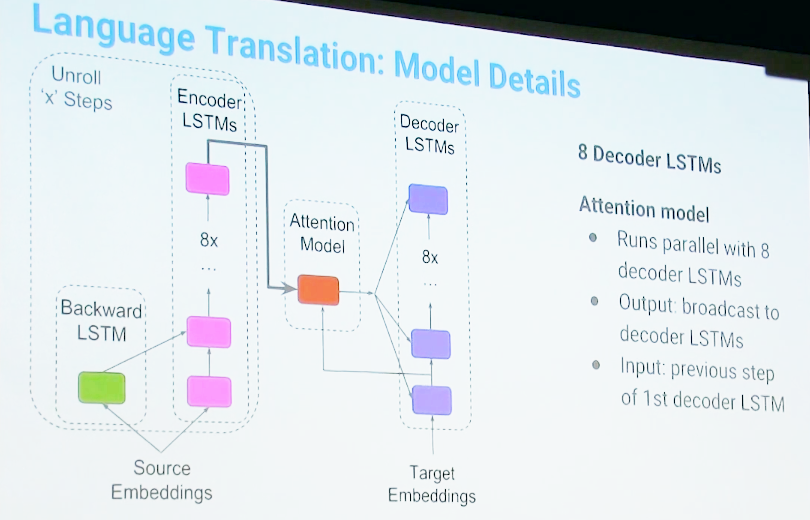

Xiaobing detailed how a sample attention model works:

- Runs eight decoder long short-term memory networks (LSTMs) in parallel

- Broadcasts its output to all decoder LSTMs

- Takes its input from the previous step of the first decoder LSTM

Training such a model takes around 100 GPUs (12 replicas of eight GPUs each, or 96 in total) and approximately a week for 2.5 million steps, which corresponds to around 300 million sentence pairs.
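The figures above can be checked with a few lines of arithmetic. The per-step number below is derived from the quoted totals rather than stated in the talk, so treat it as a rough estimate.

```python
# Back-of-the-envelope check of the training figures quoted above.
replicas = 12
gpus_per_replica = 8
total_gpus = replicas * gpus_per_replica   # 96, i.e. "around 100"

steps = 2_500_000
sentence_pairs = 300_000_000
# Derived estimate, not a figure from the talk.
pairs_per_step = sentence_pairs / steps    # 120.0

print(total_gpus, pairs_per_step)
```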

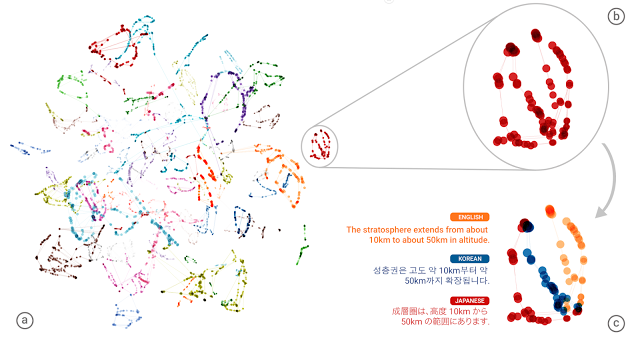

The Google Brain team also experimented with translating between a language pair the system had never seen before (Korean and Japanese), which resulted in so-called “zero-shot” translation. This, however, raised the question of whether “the system was learning a common representation, in which sentences with the same meaning are represented in similar ways regardless of the language.” By making a 3-dimensional representation of internal network data, the team could look inside the system as it translated a set of sentences between all possible pairs of Japanese, Korean, and English.

A 3D representation of a multilingual model with TensorFlow

While decoding speed used to be a major blocker, tensor processing units (TPUs) and quantization have come to the rescue, also improving quality along the way.
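For readers unfamiliar with quantization, the general idea is to store values as small integers plus a scale factor. The sketch below shows simple symmetric 8-bit quantization in plain Python. It illustrates the concept only and is not the scheme Google uses; `quantize` and `dequantize` are hypothetical names.

```python
def quantize(values, num_bits=8):
    # Map floats to signed integers in [-qmax, qmax] with one shared scale.
    qmax = 2 ** (num_bits - 1) - 1          # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    # Recover approximate floats; the error is at most about half a step.
    return [q * scale for q in quantized]
```

Integer arithmetic on such values is cheaper than floating-point arithmetic, which is the usual motivation for quantizing a model to speed up decoding.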

Xiaobing then shared the experience of enabling a multilingual model. The Google team tried training several language pairs in a single model, and Xiaobing touched upon the challenges they faced:

- early cutoff—dropping some words in a source sentence

- broken dates/numbers

- short rare queries

- transliteration of names

- junk
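As background on how several language pairs can share a single model: Google's published work on zero-shot translation describes prepending an artificial token to the source sentence to name the target language. The sketch below shows only that data-preparation step. The helper `tag_source` is hypothetical, and the example sentences are made up for illustration.

```python
def tag_source(sentence, target_lang):
    # Prepend a target-language token such as "<2ja>" to the source text.
    return "<2{}> {}".format(target_lang, sentence)

# One model sees all pairs; only the leading token changes the target.
training_sources = [
    tag_source("Hello, how are you?", "ja"),   # English -> Japanese
    tag_source("Hello, how are you?", "ko"),   # English -> Korean
]

for source in training_sources:
    print(source)
```

With this setup, requesting a pair that never appeared in training, such as Korean to Japanese, only requires tagging a Korean sentence with the Japanese token.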

To learn more about Google’s experiments in this field, you can read about their neural machine translation system or zero-shot translation. You may also be interested in research on bridging the gap between human and machine translation.


Further reading

- The Magic Behind Google Translate: Sequence-to-Sequence Models and TensorFlow

- How TensorFlow Can Help to Perform Natural Language Processing Tasks

- Using Long Short-Term Memory Networks and TensorFlow for Image Captioning

About the speakers

Garrett Smith is a founder of Guild AI, an open-source toolkit that helps developers to gain insight into their TensorFlow experiments. He has 20+ years of software development experience and has managed teams across a wide range of product development efforts. Garrett has expertise in operations and building reliable, distributed back-end systems. Prior to founding Guild AI, Garrett led the CloudBees PaaS division, which hosted hundreds of thousands of Java apps at scale. He is a frequent instructor and speaker at software conferences and an active member of the Erlang community, maintaining several prominent open-source projects.

Xiaobing Liu is a senior software engineer on the Google Brain team. In his work, Xiaobing focuses on TensorFlow and on key applications where the library could improve Google products, such as Google Search and Google Translate.