Monitoring and Visualizing TensorFlow Operations in Real Time with Guild AI

Visualizing performance statistics

One of the sessions at TensorBeat 2017 explored a tool that supplements TensorFlow operations by gathering data related to the model’s performance (GPU/CPU usage, memory consumption, and disk I/O). This blog post looks into the capabilities of the solution and provides code samples for running commands.

Garrett Smith demonstrated Guild AI—a tool with a focus on the operational issues of training and serving TensorFlow models.

The core features of this project include:

- Real-time updates on training. One can monitor how model training proceeds and get up-to-the-second feedback. Relying on intelligent polling and a high-performance embedded database, the tool minimizes its impact on your system.

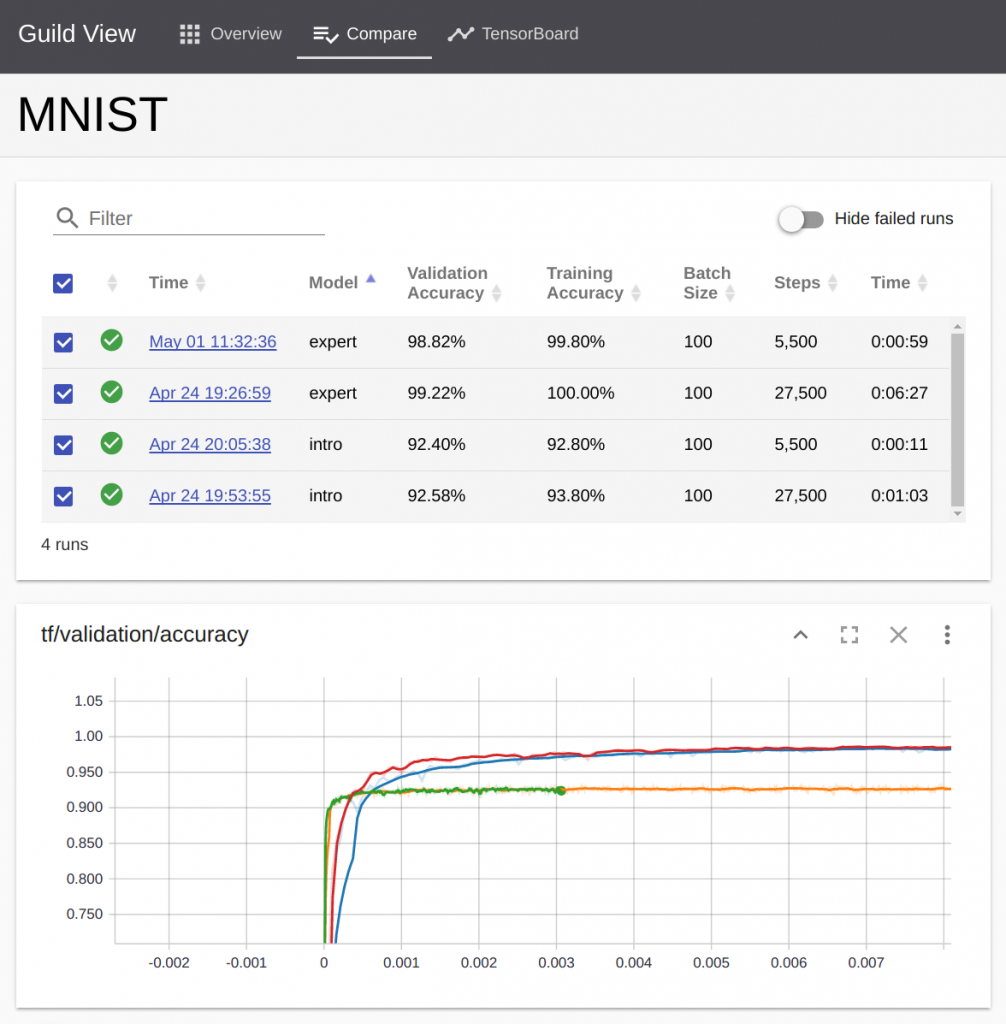

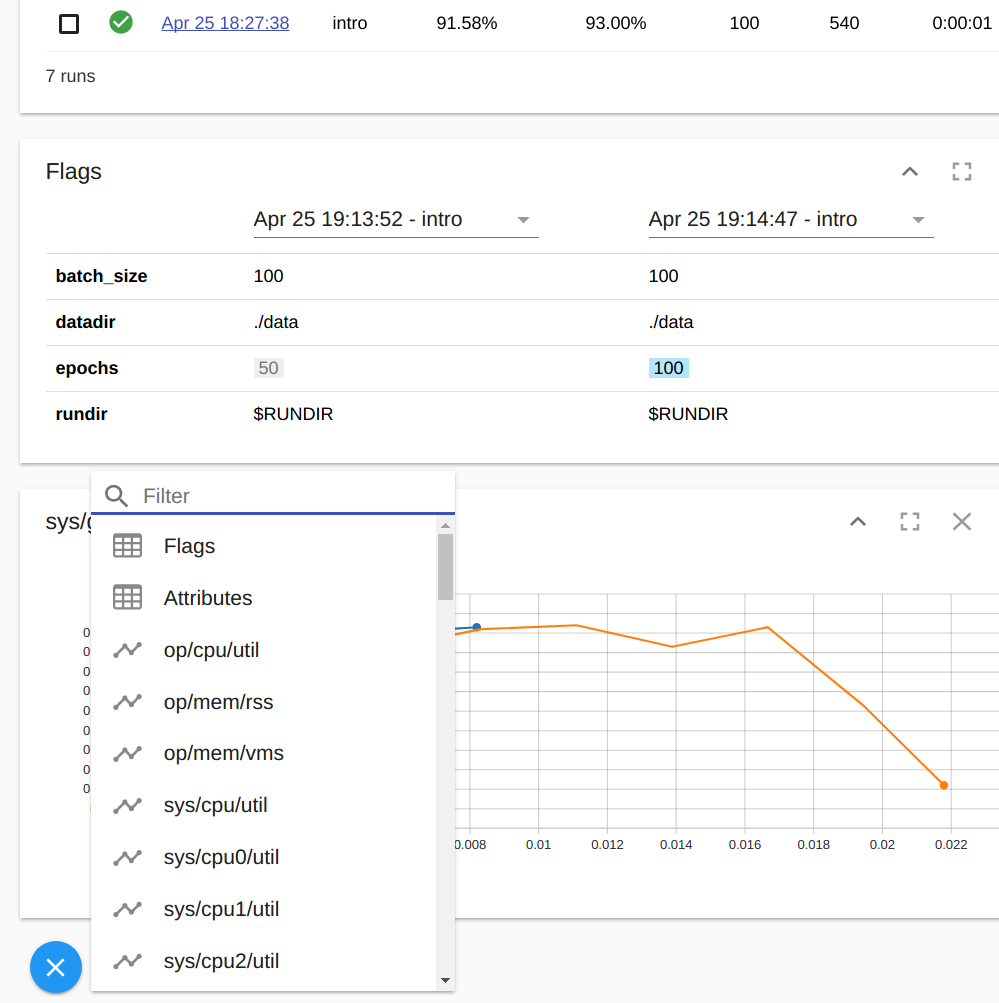

- Comparison and analysis. The tool summarizes important run results, presents them in a filterable table in real time, and compares them against previous runs. Furthermore, one can compare TensorFlow scalars and the system’s statistics. With TensorBoard’s integrated charting component, users can compare series data by global step, relative time, and wall time. It also supports series smoothing and a logarithmic Y axis.

- Advanced data gathering. In addition to TensorFlow event logs, one can collect a wider range of statistics: script output, command flags, or system attributes and statuses (e.g., GPU or CPU utilization).

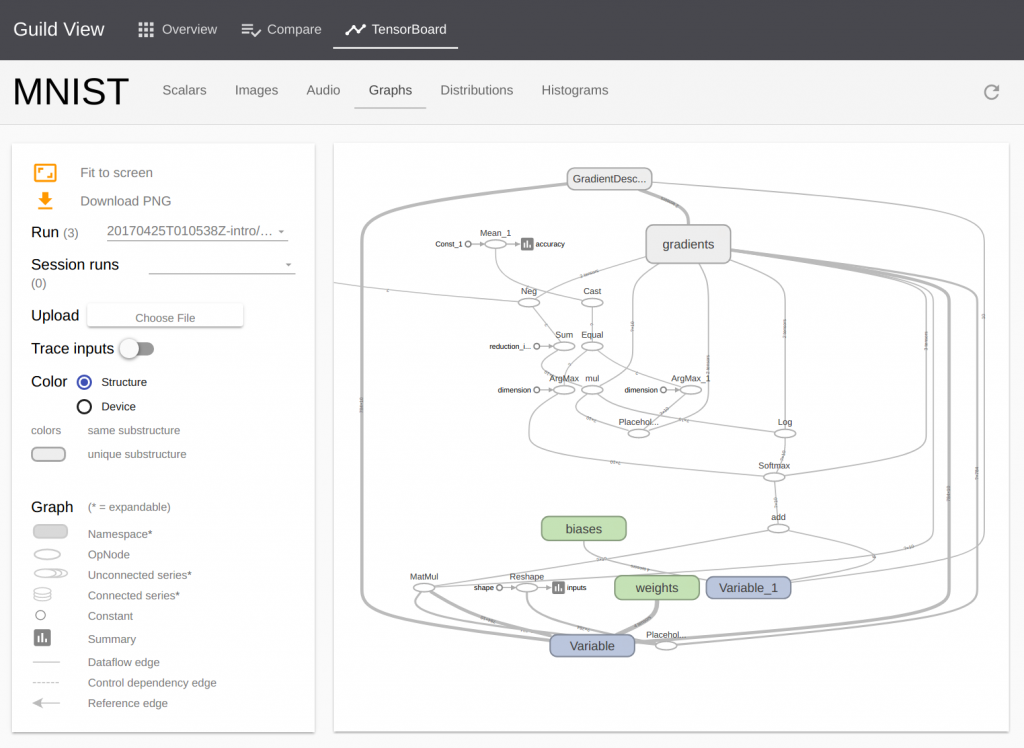

- TensorBoard integration. It eliminates the need to start a separate TensorBoard process, as the tool handles it in the background upon running the `view` command. The integration is also responsible for proxying requests and bundling TensorBoard Polymer components.

- Simplified training workflow comprising four commands: `prepare`, `train`, `evaluate`, and `serve`. The tool fills in the details for each command via the project file.

- Self-documenting project structure. Projects are text files that are easy to read, while providing instructions to perform the `prepare`, `train`, and `evaluate` operations.

- Integrated HTTP server to test the trained models before deploying them to TensorFlow Serving.
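
The series smoothing mentioned in the feature list is commonly done with an exponential moving average. The sketch below illustrates that idea only; it is not taken from Guild AI or TensorBoard, and the function name `smooth_series` and its `weight` parameter are hypothetical.

```python
from typing import List, Optional


def smooth_series(values: List[float], weight: float = 0.6) -> List[float]:
    """Exponentially smooth a scalar series (hypothetical helper)."""
    if not 0.0 <= weight < 1.0:
        raise ValueError("weight must be in [0, 1)")
    smoothed: List[float] = []
    last: Optional[float] = None
    for value in values:
        # The first point has no history, so it passes through unchanged.
        if last is None:
            last = value
        else:
            last = weight * last + (1.0 - weight) * value
        smoothed.append(last)
    return smoothed


if __name__ == "__main__":
    loss = [2.0, 4.0, 8.0]
    print(smooth_series(loss, weight=0.5))  # [2.0, 3.0, 5.5]
```

A higher `weight` gives a flatter curve that reacts more slowly to new values, while a `weight` of 0 returns the series unchanged.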

Image credit

Guild AI has an indexed database that stores run series data with a standard SQLite interface. There are also data collectors responsible for gathering TensorFlow events, system statistics (based on the psutil module with customization applied), and GPU statistics (based on nvidia-smi). The tool also uses Polymer web components.
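
To make the idea of collectors feeding an indexed SQLite store more concrete, here is a minimal sketch using Python's standard `sqlite3` module. The table layout, the function names, and the stat names are all assumptions made for illustration; Guild AI's real schema and collectors are not shown in the talk material. The `fake_system_stats` function stands in for a real collector, which might wrap psutil or nvidia-smi.

```python
import sqlite3
import time
from typing import Callable, Dict, List, Tuple


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Create a hypothetical series table plus an index for lookups by name."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS series ("
        "name TEXT NOT NULL, step INTEGER NOT NULL, "
        "wall_time REAL NOT NULL, value REAL NOT NULL)"
    )
    conn.execute(
        "CREATE INDEX IF NOT EXISTS series_name_step ON series (name, step)"
    )
    return conn


def collect(conn: sqlite3.Connection, step: int,
            collector: Callable[[], Dict[str, float]]) -> None:
    """Poll one collector and store each returned stat as a series point."""
    now = time.time()
    rows = [(name, step, now, float(value))
            for name, value in collector().items()]
    with conn:  # commits on success, rolls back on error
        conn.executemany("INSERT INTO series VALUES (?, ?, ?, ?)", rows)


def read_series(conn: sqlite3.Connection, name: str) -> List[Tuple[int, float]]:
    """Return (step, value) pairs for one series, ordered by step."""
    cursor = conn.execute(
        "SELECT step, value FROM series WHERE name = ? ORDER BY step", (name,)
    )
    return cursor.fetchall()


def fake_system_stats() -> Dict[str, float]:
    # Stand-in values; a real collector would query the system here.
    return {"sys/cpu/util": 0.42, "sys/mem/used": 1024.0}


if __name__ == "__main__":
    store = open_store()
    collect(store, 0, fake_system_stats)
    print(read_series(store, "sys/cpu/util"))
```

Because the data sits in an ordinary SQLite file, any SQLite client could query it, which is presumably the benefit of exposing a standard interface.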

The code behind

Garrett then demonstrated how things actually work on the code level.

Defining flags:

    import argparse

    # Command-line flags with defaults for the MNIST example
    parser = argparse.ArgumentParser()
    parser.add_argument("--datadir", default="/tmp/MNIST_data")
    parser.add_argument("--rundir", default="/tmp/MNIST_train")
    parser.add_argument("--batch_size", type=int, default=100)
    parser.add_argument("--epochs", type=int, default=10)
    # dest stores the --prepare switch as FLAGS.just_data
    parser.add_argument("--prepare", action="store_true", dest="just_data")
    parser.add_argument("--test", action="store_true")
    # parse_known_args does not fail on flags the script does not define
    FLAGS, _ = parser.parse_known_args()
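
The flag definitions end with `parse_known_args` rather than `parse_args`, so the script does not exit when it receives a flag it does not define. The short sketch below shows that behavior on a reduced set of the flags; `--learning_rate` is a made-up flag used only to trigger the "unknown argument" path.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser()
    parser.add_argument("--batch_size", type=int, default=100)
    parser.add_argument("--epochs", type=int, default=10)
    # dest stores the --prepare switch under a different attribute name.
    parser.add_argument("--prepare", action="store_true", dest="just_data")
    return parser


# "--learning_rate" is not defined by the parser above.
argv = ["--epochs", "5", "--prepare", "--learning_rate", "0.01"]
FLAGS, unknown = build_parser().parse_known_args(argv)

print(FLAGS.epochs)      # 5
print(FLAGS.batch_size)  # 100 (default)
print(FLAGS.just_data)   # True
print(unknown)           # ['--learning_rate', '0.01']
```

Unrecognized arguments are returned in the second element of the tuple instead of raising an error, which is why the original code can discard them with `_`.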

Using flags:

    # NUM_EXAMPLES, mnist, x, y_, sess, and train_op are defined elsewhere in the script
    steps = (NUM_EXAMPLES // FLAGS.batch_size) * FLAGS.epochs
    for step in range(steps + 1):
        images, labels = mnist.train.next_batch(FLAGS.batch_size)
        batch = {x: images, y_: labels}
        sess.run(train_op, batch)
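
As a worked example of the step arithmetic, the sketch below computes the loop length for the default flag values. The value of `NUM_EXAMPLES` is an assumption (55,000 is a common size for the MNIST training split); the source does not show how it is defined, and `total_steps` is a hypothetical helper.

```python
def total_steps(num_examples: int, batch_size: int, epochs: int) -> int:
    """Full batches per epoch multiplied by the number of epochs."""
    return (num_examples // batch_size) * epochs


# Assumed value; the original script defines NUM_EXAMPLES elsewhere.
NUM_EXAMPLES = 55000

steps = total_steps(NUM_EXAMPLES, batch_size=100, epochs=10)
print(steps)                  # 5500
print(len(range(steps + 1)))  # 5501 iterations, step values 0..5500

# Floor division drops a partial final batch in each epoch.
print(total_steps(NUM_EXAMPLES, batch_size=128, epochs=1))  # 429
```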

Using rundir. Specified as a command line option and environment variable, rundir locates all the run artifacts. (Note: Scripts should be modified to use this value.)

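    import tensorflow as tf  # TensorFlow 1.x API
    # Write TensorFlow summaries under the run directory
    train = tf.summary.FileWriter(FLAGS.rundir + "/train")
    validation = tf.summary.FileWriter(FLAGS.rundir + "/validation")

    # Save the trained model under the run directory
    tf.gfile.MakeDirs(FLAGS.rundir + "/model")
    tf.train.Saver().save(sess, FLAGS.rundir + "/model/export")

    # Restore the saved model from the same location
    saver = tf.train.import_meta_graph(
        FLAGS.rundir + "/model/export.meta")
    saver.restore(sess, FLAGS.rundir + "/model/export")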
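
Since the run directory can come from either a command-line option or an environment variable, a script needs some rule for choosing between them. The sketch below is one possible approach, not Guild AI's documented behavior: the variable name `RUNDIR`, the precedence (flag over environment over default), and both helper functions are assumptions.

```python
import argparse
import os
from typing import Dict, List, Mapping


def resolve_rundir(argv: List[str], env: Mapping[str, str]) -> str:
    """Pick the run directory: --rundir flag, then RUNDIR, then a default."""
    parser = argparse.ArgumentParser()
    # RUNDIR is an assumed variable name used for illustration.
    parser.add_argument(
        "--rundir", default=env.get("RUNDIR", "/tmp/MNIST_train")
    )
    flags, _ = parser.parse_known_args(argv)
    return flags.rundir


def run_paths(rundir: str) -> Dict[str, str]:
    """Locations of the run artifacts used in the snippets above."""
    return {
        "train": os.path.join(rundir, "train"),
        "validation": os.path.join(rundir, "validation"),
        "model": os.path.join(rundir, "model", "export"),
    }


if __name__ == "__main__":
    rundir = resolve_rundir(["--rundir", "/tmp/run-1"], os.environ)
    for name, path in run_paths(rundir).items():
        print(name, path)
```

Keeping every artifact path derived from a single `rundir` value means that summaries, checkpoints, and exported models for one run stay together.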

The roadmap

The project’s developers plan to enhance the tool’s visualization capabilities and to further improve its analytical and comparative algorithms. They also want to deliver plugin exchange with TensorBoard and build a “model zoo” with more examples available. You can check out Guild AI’s GitHub repo for details or browse through Garrett’s presentation.

Further reading

- Visualizing TensorFlow Graphs with TensorBoard

- TensorFlow in Action: TensorBoard, Training a Model, and Deep Q-learning

- Logical Graphs: Native Control Flow Operations in TensorFlow

- TensorFlow in the Cloud: Accelerating Resources with Elastic GPUs

About the expert

Garrett Smith is a founder of Guild AI, an open-source toolkit that helps developers gain insight into their TensorFlow experiments. He has 20+ years of software development experience and has managed teams across a wide range of product development efforts. Garrett has expertise in building reliable, distributed back-end systems. Prior to founding Guild AI, he led the CloudBees PaaS division, which hosted hundreds of thousands of Java applications at scale. Garrett is a frequent instructor and speaker at software conferences, as well as an active member of the Erlang community, maintaining several prominent open-source projects.